Big Ideas, Real Impact.

This case study is written as a design log. Instead of presenting only the final idea, it documents the thinking process behind an AI-assisted footwear workflow: where the current process slows down, how generative tools like ComfyUI could be structured for designers, and how outputs move into review and 3D development.

ROLE: Generative AI Image

TOOL: ComfyUI, ChatGPT, CivitAI, Meshy

FOCUS: AI Workflow Experiment

Structuring AI for Footwear Design Exploration

This experiment suggests that generative AI is most effective when it supports the structure of the design process rather than replacing it. Instead of treating AI as a tool for producing random visual outputs, the workflow begins with clearly defined design intent, including silhouette direction, outsole language, and performance context. By establishing these elements before generation, the prompts guide AI toward results that remain aligned with the product vision and brand design language.
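One way to picture "design intent before generation" is as a small structured record that is turned into a prompt in a fixed order. The sketch below is illustrative only: the `DesignIntent` class, its field names, and the example wording are assumptions made for this example, not the prompts used in the project.

```python
from dataclasses import dataclass, field


@dataclass
class DesignIntent:
    """Design intent fixed before any image is generated (hypothetical field names)."""
    silhouette: str
    outsole: str
    performance_context: str
    style_notes: list[str] = field(default_factory=list)


def build_prompt(intent: DesignIntent) -> str:
    """Join the intent fields into one comma-separated prompt, always in the same order."""
    parts = [
        intent.silhouette,
        intent.outsole,
        intent.performance_context,
        *intent.style_notes,
    ]
    # Drop empty fields so a missing note does not leave a stray comma.
    return ", ".join(part.strip() for part in parts if part.strip())


# Placeholder values, written for illustration only.
intent = DesignIntent(
    silhouette="low-top sneaker silhouette",
    outsole="flat cupsole outsole",
    performance_context="everyday urban wear",
    style_notes=["marker-like graphic details"],
)
print(build_prompt(intent))
```

Keeping the fields separate means one element, such as the outsole language, can be changed between runs while the rest of the prompt stays fixed, which makes variations easier to compare.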

Within this approach, generative AI becomes a tool for accelerating exploration rather than substituting creative judgment. Designers can quickly test multiple variations, evaluate visual directions, and iterate with greater speed while still maintaining control over the design narrative. The process also improves communication across the team by keeping references, generated concepts, and design reasoning connected within a single workflow.

As a result, the workflow helps create a more efficient path from early concept to design review and eventual development. Instead of disconnected experiments, AI outputs become part of a guided exploration process that supports clearer decisions, faster iteration cycles, and stronger alignment between concept exploration and real footwear development.

01

Concept Direction

The project started with a design intention centered on equality and freedom. I wanted to express a world where people with and without physical disabilities are seen with the same dignity, without distinction or limitation. The visual direction focused on a sense of urban freedom, movement, and confidence that goes beyond physical constraints. The shoe silhouette itself remained relatively basic and wearable, while the graphic details on the shoe were designed to feel more expressive, city-driven, and spontaneous, inspired by a painterly, marker-like energy. This step was important because it defined not only the visual tone, but also the emotional message behind the concept.

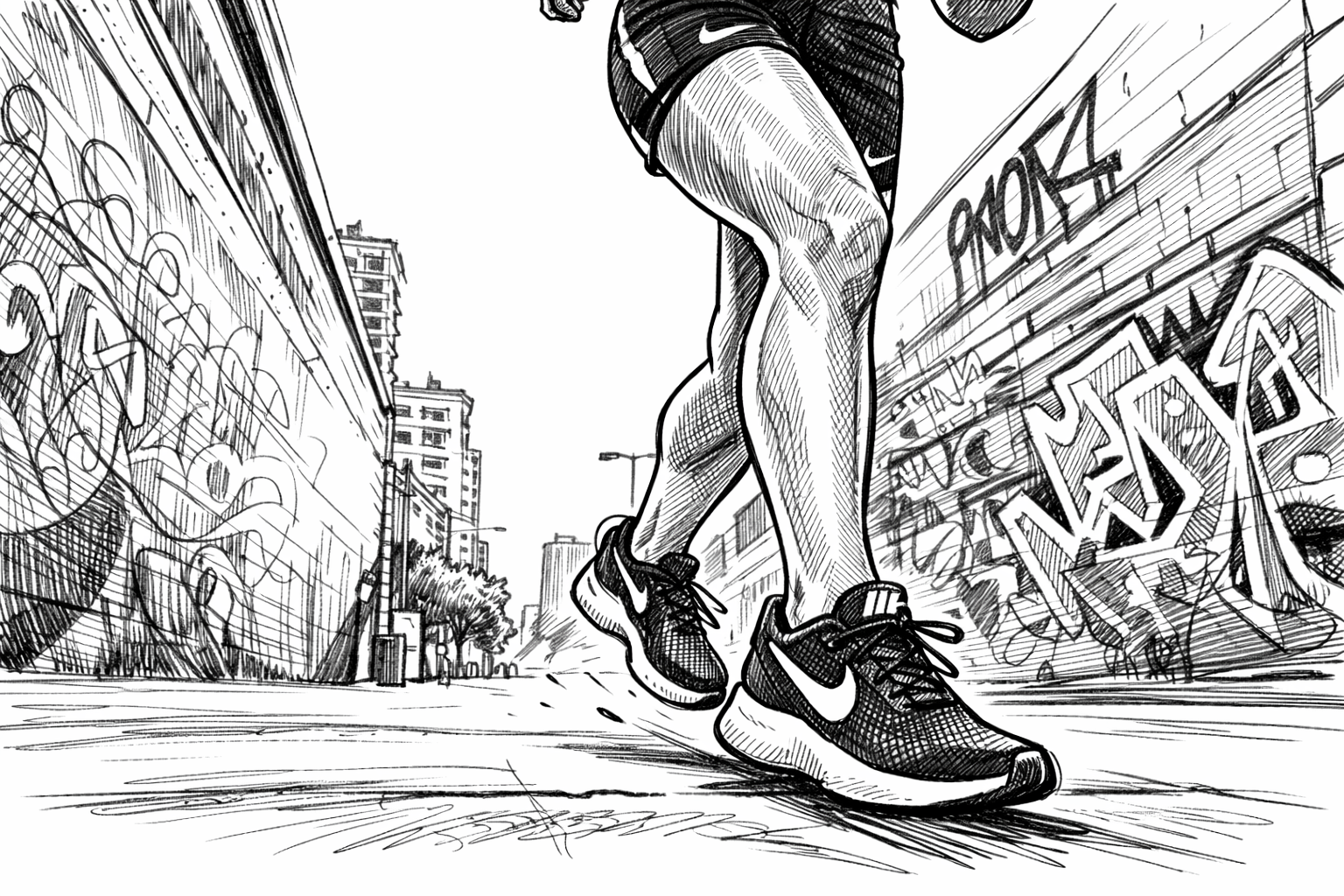

02

Research & Reference Exploration

In the second stage, I gathered references from the internet to establish both visual inspiration and design constraints. This included mood references for urban styling, movement, freedom, and inclusive representation, as well as footwear references for shape, proportion, sole structure, and detail direction. At the same time, I used ComfyUI to test simple footwear concepts and pose directions, which helped narrow down the visual language early. This stage worked as a bridge between idea and execution by defining what the design should feel like, what kind of pose would support the concept, and what visual elements should remain consistent throughout the workflow.

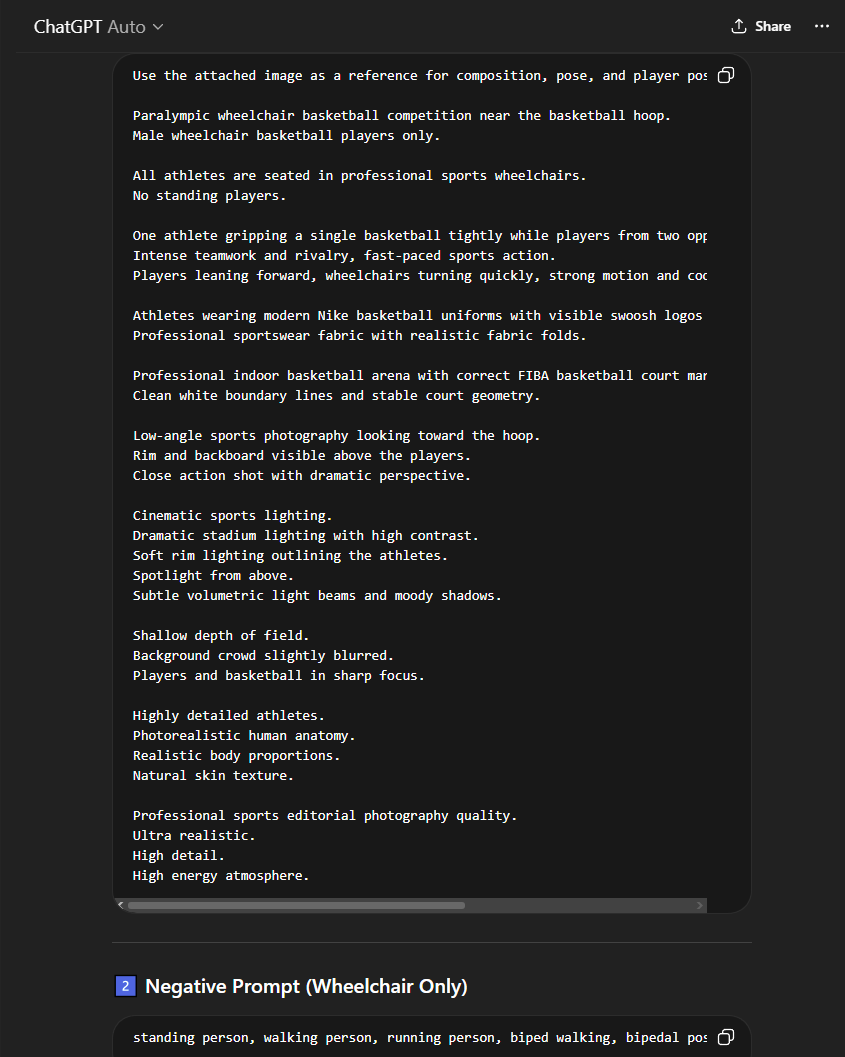

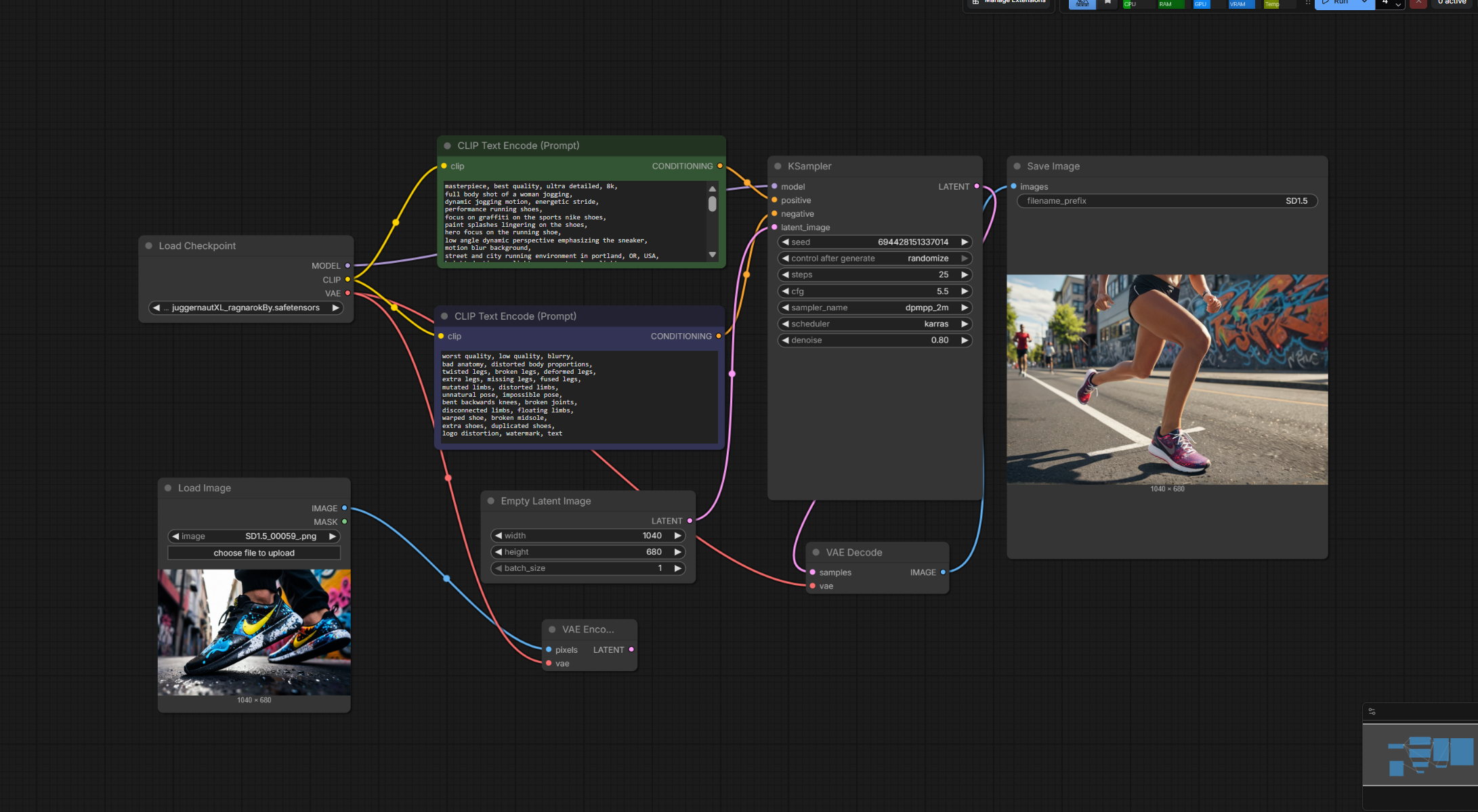

03

Prompt Iteration & AI Generation

In the third stage, I iterated continuously between ComfyUI and ChatGPT to improve prompts and generate stronger results. I used ChatGPT to refine wording, structure the design intent more clearly, and make the prompts more consistent, while I used ComfyUI to repeatedly test visual outcomes. During this process, I adjusted sampler settings such as denoise strength and CFG scale, along with other nodes in the workflow, to reach a more balanced result. The goal was not just to generate attractive images, but to find outputs that best matched the intended concept, including the shoe design, pose, mood, and inclusive message. This stage was essentially a cycle of prompt improvement, generation, comparison, and refinement until the direction felt visually convincing.
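The comparison step can be made more systematic by sweeping the two settings as a grid instead of changing them by hand. The following is a minimal sketch, assuming the workflow has been exported from ComfyUI in its API JSON format, where each node is keyed by an id and carries a `class_type` and an `inputs` dictionary, and assuming the sampler is a `KSampler` node with `cfg` and `denoise` inputs. The node id `"3"`, the trimmed workflow, and the value ranges are hypothetical, and the sketch only prepares variants; it does not submit them to ComfyUI.

```python
import copy
from itertools import product


def sweep_ksampler(workflow, node_id, cfg_values, denoise_values):
    """Yield (cfg, denoise, patched_workflow) for every combination of settings."""
    for cfg, denoise in product(cfg_values, denoise_values):
        patched = copy.deepcopy(workflow)  # leave the base workflow untouched
        patched[node_id]["inputs"]["cfg"] = cfg
        patched[node_id]["inputs"]["denoise"] = denoise
        yield cfg, denoise, patched


# Hypothetical, heavily trimmed workflow: a real export has more nodes and inputs.
base_workflow = {
    "3": {
        "class_type": "KSampler",
        "inputs": {"seed": 42, "steps": 20, "cfg": 7.0, "denoise": 1.0},
    },
}

variants = list(sweep_ksampler(base_workflow, "3", [5.0, 7.0, 9.0], [0.4, 0.6]))
for cfg, denoise, _ in variants:
    print(f"cfg={cfg} denoise={denoise}")
```

Holding the seed fixed across the grid keeps the differences between outputs attributable to CFG and denoise rather than to random variation.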

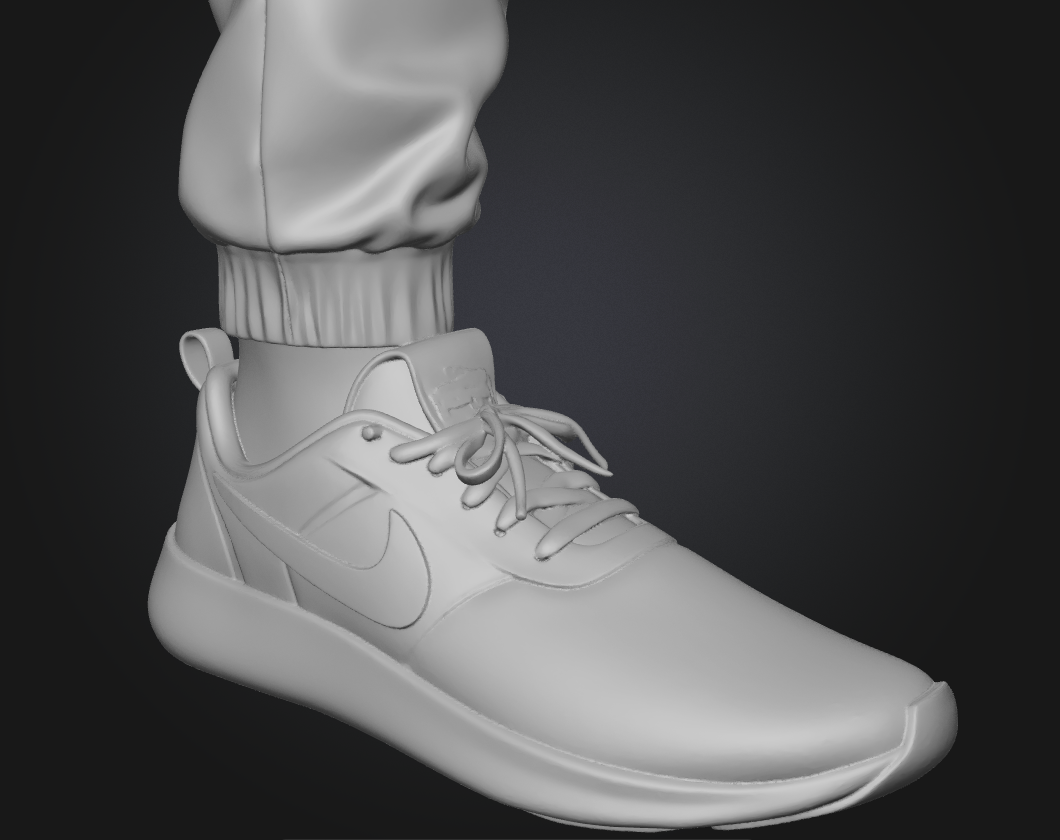

04

From Concept to 3D Exploration

In the final stage, I explored how the selected footwear concept could move into a 3D process by attempting an initial mesh interpretation of the design. This step focused on translating the 2D AI-generated concept into a more tangible form and identifying which parts of the design were structurally clear enough for 3D development and which parts needed further clarification. It also revealed gaps between visual concept generation and production-ready form, such as proportion accuracy, sole construction logic, and material definition. This stage was valuable because it showed that the workflow was not only about image generation, but also about how a concept could evolve toward a more realistic development pipeline. In a full production environment, this step could support collaboration with 3D designers, prototyping teams, or footwear developers.